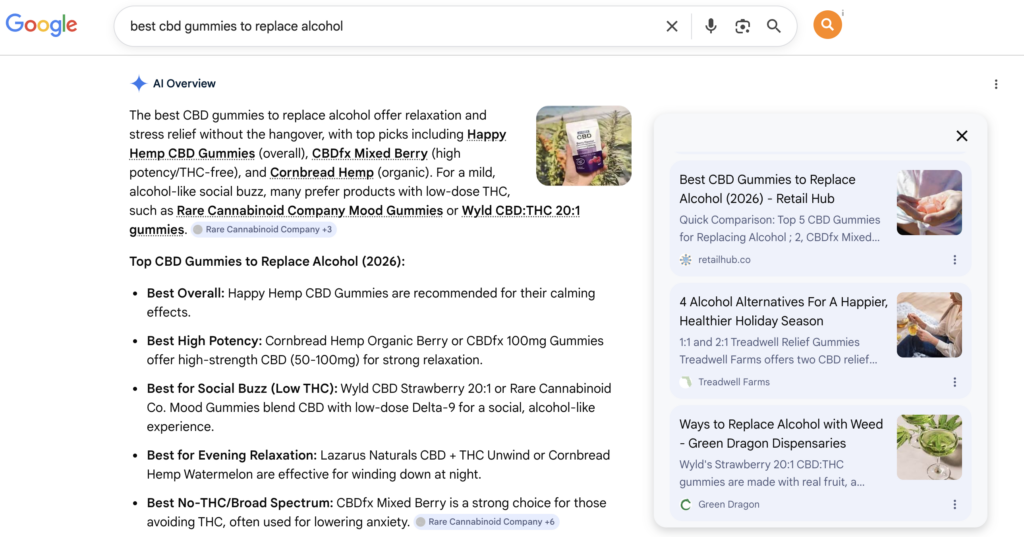

Most SEO conversations are still anchored to Google’s traditional results page. That’s understandable, but it’s increasingly incomplete. A significant and growing portion of search-like behavior now happens inside large language model interfaces, where users ask questions and receive synthesized answers that never send them to a search results page at all.

LLM SEO is the practice of optimizing your brand’s digital presence so that ChatGPT, Perplexity, Gemini, and similar tools cite your business when answering relevant queries. It’s not a replacement for traditional SEO. It’s a layer on top of it, and right now most brands aren’t actively working on it.

If you want your business to show up in the answers these tools generate, here’s what actually drives that.

What LLM SEO Is (and How It Differs from Traditional SEO)

Traditional SEO is about earning a high-ranking position on a search results page. LLM SEO is about earning a citation in a generated answer. The output looks different, the user experience is different, and the optimization signals are weighted differently.

When someone searches Google, they see a list of links and choose where to click. When someone asks ChatGPT or Perplexity the same question, they receive a composed answer that may or may not mention specific brands. If yours is mentioned, you get exposure. If it isn’t, you don’t exist for that query.

The underlying goal is the same one SEO has always had: be the most credible, relevant, and accessible source for your topic. But the path to that outcome runs through different signals.

How LLMs Decide Which Brands to Mention

Large language models don’t look answers up in a live index the way Google does (though some AI search tools, like Perplexity, blend live retrieval with model knowledge). Their recommendations are shaped by a combination of training data, retrieval-augmented generation (RAG), and the density of credible references to a brand across the broader web.

In practical terms, this means a few things matter a lot:

- Volume of credible mentions: The more often your brand appears in authoritative sources, the more likely it is that a model has encountered it as a signal during training or retrieval.

- Consistency of context: Brands that are mentioned repeatedly in consistent, on-topic contexts are easier for models to associate with specific queries.

- Source quality: A mention in a high-authority publication carries more weight than one in a low-traffic blog. LLMs learn from the same web humans trust.

- Recency: For models that incorporate live retrieval, recent mentions in indexed content matter. Stale or thin presence gets deprioritized.

There’s no magic algorithm to reverse-engineer here. The discipline is about building a consistent, credible footprint across the sources these systems draw from.

Content Structure and the Signals LLMs Prefer

If you want to be cited rather than skipped, your content needs to be structured in a way that makes it easy for a language model to extract a clear, confident answer.

LLMs are trained on patterns of question-and-answer, explanation, and structured knowledge. Content that mirrors those patterns is easier to extract and more likely to be surfaced. A few structural priorities:

- Lead with the answer. Don’t bury your main point in the third paragraph. Frontload the response to the implied query in every section.

- Use direct, declarative language. Hedged, vague writing is harder for a model to confidently excerpt. Clear assertions work better.

- Optimize for topic depth, not just keyword density. A single thorough piece that covers a subject from multiple angles builds more topical authority than several shallow posts targeting related keywords.

- FAQ sections are underrated. A well-structured FAQ mirrors the conversational questions people type into LLM interfaces. When someone asks one of those questions, a clearly formatted Q&A on your site gives a retrieval system a ready-made, self-contained answer to cite.

Schema markup reinforces these signals. FAQ schema, HowTo schema, and Organization schema help AI systems understand what your content is about and who is publishing it.
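To make this concrete, here is a minimal Python sketch that builds FAQPage structured data (schema.org’s name for FAQ schema) as JSON-LD and wraps it in the script tag pages typically use to embed it. The question and answer strings are hypothetical placeholders, and the sketch assumes the markup mirrors Q&A text that is actually visible on the page. HowTo and Organization markup follow the same `@context`/`@type` pattern with different properties.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def as_script_tag(data):
    """Wrap structured data in the <script> tag pages use to embed JSON-LD."""
    # Escape "<" so answer text can't prematurely close the script element.
    payload = json.dumps(data, indent=2).replace("<", "\\u003c")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


# Hypothetical Q&A content; replace with the FAQ text actually on the page.
faq = faq_jsonld([
    ("What is LLM SEO?",
     "Optimizing your brand's presence so AI assistants cite it in answers."),
    ("Does LLM SEO replace traditional SEO?",
     "No. It is a layer on top of traditional SEO."),
])
print(as_script_tag(faq))
```

Generating the markup from the same source as the visible FAQ, rather than hand-editing JSON, is one way to keep the two from drifting apart.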

The PR and Link Building Connection

This is where LLM SEO and traditional off-page SEO overlap most directly, and it’s one of the most actionable parts of the strategy.

LLMs are trained on large datasets crawled from the open web. The publications, outlets, and reference sites that appear most frequently in those datasets carry the most weight. Getting your brand mentioned in those sources, with context that connects you to your core topics, creates the kind of signal these models register.

PR article placements in high-authority publications accomplish two things simultaneously: they build the link equity that supports your traditional SEO, and they create indexed references that contribute to your LLM citation footprint. One piece of content, two categories of durable return.

Platforms like Retail Hub let you browse PR placements and AI search visibility packages filtered by domain authority and topical relevance, so you’re putting your brand in front of the sources that actually feed into LLM training and retrieval rather than buying bulk placements on low-signal sites.

Brand Entity Optimization: Getting LLMs to Know Who You Are

One of the more underappreciated aspects of LLM SEO is entity optimization. LLMs organize knowledge around entities: people, companies, products, concepts. The clearer and more consistent your brand’s entity profile across the web, the more confidently a model can associate your business with relevant topics.

A few things that strengthen your entity footprint:

- Wikipedia or Wikidata presence: These are high-trust sources that appear heavily in training data. If your brand qualifies, a well-maintained entry matters.

- Consistent NAP data: Name, address, and phone consistency across directories and citation sources reinforces your entity identity.

- Third-party brand mentions with context: A mention that describes what your brand does in specific terms gives a model much more to work with than a bare hyperlink or name drop.

- Founder and leadership presence: Individual expertise signals often flow back to brand authority. A founder quoted in industry publications builds entity credibility for the company.
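The NAP point lends itself to a quick audit. The sketch below is one possible approach, not a standard tool: it normalizes the name, address, and phone from each listing, treats the most common version as canonical, and reports the sources that differ. The listing data and the `find_inconsistent` helper are hypothetical.

```python
import re
from collections import Counter


def normalize_phone(phone):
    """Keep digits only, so '(555) 010-0199' and '555.010.0199' compare equal."""
    return re.sub(r"\D", "", phone)


def normalize_text(value):
    """Lowercase, replace punctuation with spaces, and collapse whitespace."""
    value = re.sub(r"[^\w\s]", " ", value.lower())
    return " ".join(value.split())


def nap_key(listing):
    return (
        normalize_text(listing["name"]),
        normalize_text(listing["address"]),
        normalize_phone(listing["phone"]),
    )


def find_inconsistent(listings):
    """Return sources whose normalized NAP differs from the most common version."""
    keys = {source: nap_key(data) for source, data in listings.items()}
    canonical, _ = Counter(keys.values()).most_common(1)[0]
    return sorted(source for source, key in keys.items() if key != canonical)


# Hypothetical listings gathered from your site and two directories.
listings = {
    "website": {"name": "Acme Widgets", "address": "12 Main St., Springfield",
                "phone": "(555) 010-0199"},
    "directory_a": {"name": "Acme Widgets", "address": "12 Main St Springfield",
                    "phone": "555-010-0199"},
    "directory_b": {"name": "ACME Widgets Inc.", "address": "12 Main Street, Springfield",
                    "phone": "555.010.0199"},
}
print(find_inconsistent(listings))  # ['directory_b']
```

The normalization is deliberately shallow: punctuation and phone formatting are ignored, but “St” versus “Street” or an added “Inc.” still count as mismatches, which is usually what you want to surface. With a tie, `Counter.most_common` returns whichever version was seen first, so you may prefer to treat your own website’s record as the canonical version explicitly.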

Think of this less as a checklist and more as a long-term brand clarity project. The cleaner and more consistent your brand’s identity across the web, the easier it is for any system, human or machine, to understand and trust what you do.
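On your own site, one common way to express that identity in machine-readable form is Organization markup, with `sameAs` links pointing to other profiles of the same entity, such as a Wikidata item. A minimal sketch, with every name, URL, and the Wikidata ID as hypothetical placeholders:

```python
import json


def organization_jsonld(name, url, telephone, street, locality, same_as):
    """Build a schema.org Organization object from a single canonical record."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "telephone": telephone,
        "address": {
            "@type": "PostalAddress",
            "streetAddress": street,
            "addressLocality": locality,
        },
        # sameAs points at other profiles that describe the same entity.
        "sameAs": list(same_as),
    }


# All values below are hypothetical placeholders.
org = organization_jsonld(
    name="Acme Widgets",
    url="https://www.example.com",
    telephone="+1-555-010-0199",
    street="12 Main St",
    locality="Springfield",
    same_as=[
        "https://www.wikidata.org/wiki/Q000000",
        "https://www.linkedin.com/company/example",
    ],
)
print(json.dumps(org, indent=2))
```

Building this from one canonical record, the same one you would use for directory listings, keeps the on-site entity description consistent with the NAP data published elsewhere.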

Measuring LLM SEO Performance

Measurement here is less mature than traditional SEO, but it’s not impossible. The main approaches practitioners are using right now include direct query testing, brand mention monitoring, and referral traffic from AI sources.

Direct query testing means regularly prompting ChatGPT, Perplexity, Gemini, and similar tools with the questions your customers are likely to ask and auditing whether your brand appears in the responses. It’s manual and imperfect, but it gives you a baseline and a way to track progress over time.
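Keeping those audits comparable over time is easier if every result is recorded the same way. The sketch below assumes you paste responses in by hand (it makes no API calls) and computes, per tool, the share of prompts whose answer mentions the brand. The brand name, prompts, responses, and helper names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ProbeResult:
    tool: str      # e.g. "ChatGPT", "Perplexity", "Gemini"
    prompt: str    # the customer-style question that was asked
    response: str  # the answer text, pasted in by hand


def mentions(response, brand_terms):
    """True if any brand term appears in the response (case-insensitive)."""
    text = response.lower()
    return any(term.lower() in text for term in brand_terms)


def mention_rate_by_tool(results, brand_terms):
    """Share of probes per tool whose answer mentions any brand term."""
    totals, hits = {}, {}
    for r in results:
        totals[r.tool] = totals.get(r.tool, 0) + 1
        if mentions(r.response, brand_terms):
            hits[r.tool] = hits.get(r.tool, 0) + 1
    return {tool: hits.get(tool, 0) / n for tool, n in totals.items()}


# Hypothetical results from one round of manual testing.
results = [
    ProbeResult("ChatGPT", "best widget supplier?",
                "Popular options include Acme Widgets and several others."),
    ProbeResult("ChatGPT", "who makes durable widgets?",
                "Several manufacturers are well reviewed."),
    ProbeResult("Perplexity", "best widget supplier?",
                "According to reviews, acme widgets is a common pick."),
]
rates = mention_rate_by_tool(results, ["Acme Widgets", "acmewidgets.com"])
print(rates)  # {'ChatGPT': 0.5, 'Perplexity': 1.0}
```

Plain substring matching is crude (a short brand name can match unrelated words), so listing a few exact variants in `brand_terms` works better than a single short token. Rerunning the same prompt set on a fixed schedule is what turns this into a trend line.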

Brand mention monitoring through tools like Google Alerts or more sophisticated listening platforms can surface new citations in indexed content that may feed into model retrieval. And if you’re seeing traffic from referral sources labeled as ChatGPT.com or Perplexity.ai in your analytics, that’s a direct signal your content is being cited and driving clicks.
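If your analytics tool can export raw referrer URLs, a small classifier can roll AI referrals into one report. This is a sketch under assumptions: `chatgpt.com` and `perplexity.ai` come from the text above, `gemini.google.com` is added as an assumption, and the domain list will need maintaining as tools change.

```python
from collections import Counter
from urllib.parse import urlparse

# Referrer hostnames treated as AI assistants. chatgpt.com and perplexity.ai come
# from the text; gemini.google.com is an added assumption. Extend as needed.
AI_REFERRER_DOMAINS = {
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
}


def ai_source(referrer):
    """Return the AI tool name for a referrer URL, or None if it isn't one."""
    host = (urlparse(referrer).hostname or "").lower()
    for domain, name in AI_REFERRER_DOMAINS.items():
        # Match the domain itself or any subdomain (e.g. www.perplexity.ai).
        if host == domain or host.endswith("." + domain):
            return name
    return None


# Hypothetical referrer export from an analytics tool.
referrers = [
    "https://chatgpt.com/",
    "https://www.perplexity.ai/search?q=widgets",
    "https://www.google.com/",
    "",
]
ai_counts = Counter(name for name in map(ai_source, referrers) if name)
print(ai_counts)
```

Some AI-driven visits may arrive with no referrer at all and show up as direct traffic, so these counts are best read as a floor rather than a complete total.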

This measurement layer will improve as the space matures. The teams investing in LLM SEO now will also be better positioned to use those analytics when they arrive.

Conclusion

LLM SEO isn’t a separate strategy from good digital marketing. It’s what happens when you take the fundamentals seriously: authoritative content, credible external mentions, clear brand identity, and a presence in the sources that matter. The difference is that the payoff now includes citation in AI-generated answers, not just a position on a results page.

If you want to accelerate your brand’s visibility in ChatGPT, Perplexity, Gemini, and Google AI Overviews, Retail Hub offers PR placements and AI search visibility packages built specifically for this kind of strategy. Browse packages at Retail Hub.